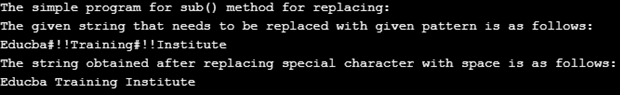

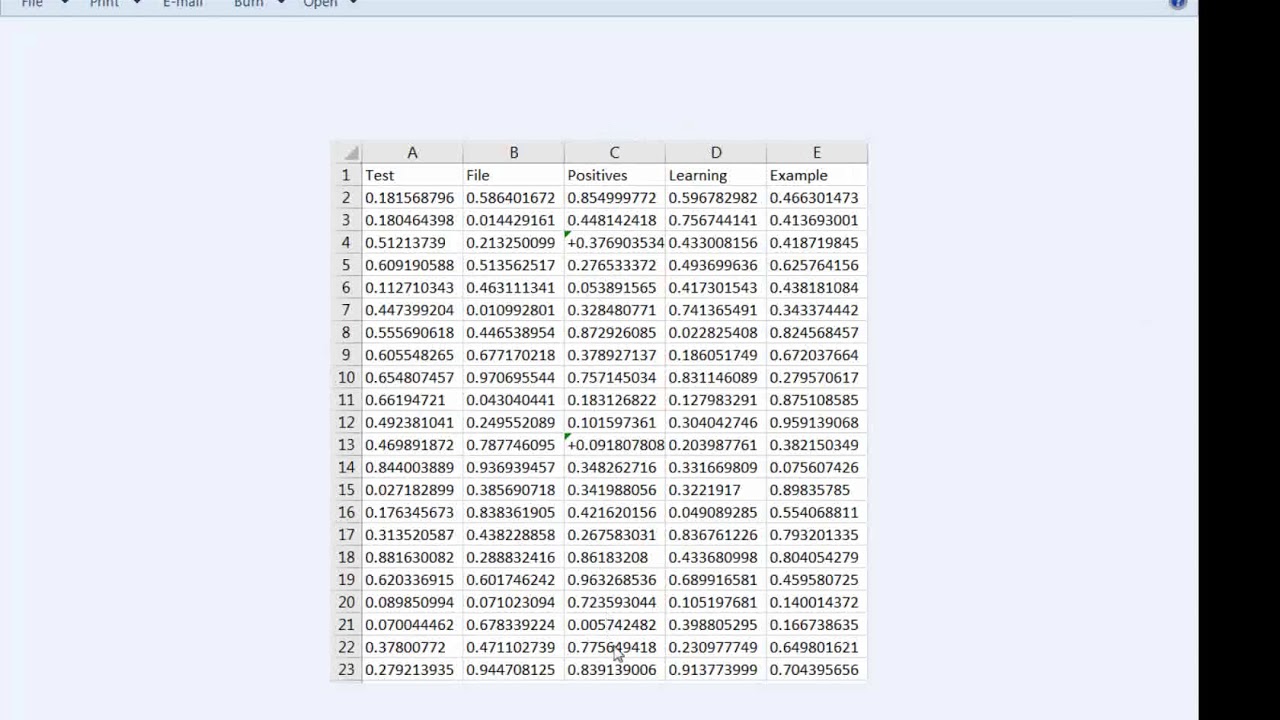

I have two lists:

- a list of about 750K "sentences" (long strings)
- a list of about 20K "words" that I would like to delete from my 750K sentences

So, I have to loop through 750K sentences and perform about 20K replacements, but ONLY if my words are actually "words" and are not part of a larger string of characters.

I am doing this by pre-compiling my words so that they are flanked by the \b word-boundary metacharacter:

    compiled_words =

Then I loop through my "sentences":

    import re

This nested loop is processing about 50 sentences per second, which is nice, but it still takes several hours to process all of my sentences.

Is there a way to use the str.replace method (which I believe is faster), but still require that replacements only happen at word boundaries?

Alternatively, is there a way to speed up the re.sub method? I have already improved the speed marginally by skipping re.sub if the length of my word is greater than the length of my sentence, but it's not much of an improvement.

Use this method if you want the fastest regex-based solution. For a dataset similar to the OP's, it's approximately 1000 times faster than the accepted answer.

If you don't care about regex, use this set-based version, which is 2000 times faster than a regex union.

Optimized Regex with Trie

A simple Regex union approach becomes slow with many banned words, because the regex engine doesn't do a very good job of optimizing the pattern.

It's possible to create a Trie with all the banned words and write the corresponding regex. The resulting trie or regex aren't really human-readable, but they do allow for very fast lookup and match.

Example

The word list is converted to a trie, and then to this regex pattern:

    r"\bfoo(?:ba|xar|zap?)\b"

The huge advantage is that to test if zoo matches, the regex engine only needs to compare the first character (it doesn't match), instead of trying the 5 words. The preprocessing is overkill for 5 words, but it shows promising results for many thousands of words.

Note that (?:) non-capturing groups are used because:

- foobar|baz would match foobar or baz, but not foobaz
- foo(bar|baz) would save unneeded information to a capturing group.

Here's a slightly modified gist, which we can use as a trie.py library:

    import re

The trie can be exported to a Regex pattern. The corresponding Regex should match much faster than a simple Regex union.

Here's a small test (the same as this one):

    # Encoding: utf-8
    with open('/usr/share/dict/american-english') as wordbook:
        banned_words =

    ("Surely not a word", "#surely_NöTäWORD_so_regex_engine_can_return_fast"),

    return re.compile(r"\b" + trie.pattern() + r"\b", re.IGNORECASE)

    print("\nTrieRegex of %d words" % 10**exp)
    union = trie_regex_from_words(banned_words)
    for description, test_word in test_words:
        time = timeit.timeit(find(test_word), number=1000) * 1000
        print(" %s : %.1fms" % (description, time))
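As a rough illustration of the trie approach described in the answer, here is a minimal, self-contained sketch. It is not the gist the answer refers to: the `Trie` class, its `add` and `pattern` methods, the `remove_banned_words` helper, and the sample word list are all assumptions made for this example. Only the names `trie_regex_from_words` and `trie.pattern()` follow the surviving test fragment.

```python
import re


class Trie:
    """Minimal trie that can be exported as a regex pattern (illustrative sketch)."""

    def __init__(self):
        self.root = {}

    def add(self, word):
        node = self.root
        for char in word:
            node = node.setdefault(char, {})
        node[""] = True  # the empty-string key marks the end of a word

    def pattern(self):
        return self._pattern(self.root)

    def _pattern(self, node):
        # A node that holds only the end marker contributes nothing further.
        if "" in node and len(node) == 1:
            return ""
        alternatives = []  # branches that continue for more than one character
        single_chars = []  # branches that end right after one character
        for char in sorted(key for key in node if key != ""):
            sub = self._pattern(node[char])
            if sub == "":
                single_chars.append(re.escape(char))
            else:
                alternatives.append(re.escape(char) + sub)
        only_chars = not alternatives
        if len(single_chars) == 1:
            alternatives.append(single_chars[0])
        elif single_chars:
            alternatives.append("[" + "".join(single_chars) + "]")
        if len(alternatives) == 1:
            result = alternatives[0]
        else:
            result = "(?:" + "|".join(alternatives) + ")"
        if "" in node:
            # A word also ends at this node, so everything after it is optional.
            result = result + "?" if only_chars else "(?:" + result + ")?"
        return result


def trie_regex_from_words(words):
    """Compile one word-bounded, case-insensitive regex for all words."""
    trie = Trie()
    for word in words:
        trie.add(word)
    return re.compile(r"\b" + trie.pattern() + r"\b", re.IGNORECASE)


def remove_banned_words(sentence, banned_regex):
    """Delete every banned word from the sentence in a single pass (hypothetical helper)."""
    return banned_regex.sub("", sentence)


# Illustrative word list, chosen for this sketch only.
banned_regex = trie_regex_from_words(["foobar", "foobah", "fooxar", "foozap", "fooza"])
print(banned_regex.pattern)
print(remove_banned_words("a foobar and a foobaz walk into a fooza", banned_regex))
```

The design point is that each sentence is scanned once against a single compiled pattern, instead of once per banned word as in the nested loop from the question. Note that deleting a word leaves its surrounding spaces in place, so a cleanup step may be needed afterwards.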
